OpenAI Gym for NES games + DQN with Keras to learn Mario Bros. from raw pixels

- An EXPERIMENTAL openai-gym wrapper for NES games.

- Includes a Double Deep Q-Network that learns to play the 1983 game Mario Bros.

Installation

You can use a `virtualenv` or a `pipenv` if you want to install the dependencies in an isolated environment.

- Use Python 3 only.

- Install openai-gym and keras with the tensorflow backend (with `pip`), and `cv2` (the OpenCV module; on Debian/Ubuntu, `sudo pip install opencv-python`, see this SO question).

- Install the `fceux` NES emulator and make sure `fceux` is in your `$PATH`. On Debian/Ubuntu, you can simply use `sudo apt install fceux`. Version 2 at least is needed.

- Find a `.nes` ROM for the Mario Bros. game (any dump for the Nintendo NES will do). Save it to `src/roms/mario_bros.nes`.

- Copy the state files from `roms/fcs/*` to your `~/.fceux/fcs/` (for faster loading of the beginning of the game).
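
Before the first run, it can help to check that the emulator and the ROM are where the steps above put them. The following is a minimal sketch and not part of this repository: the function name `check_nesgym_setup` is hypothetical, and its default paths simply mirror the ones listed above.

```python
import os
import shutil


def check_nesgym_setup(rom_path="src/roms/mario_bros.nes",
                       fcs_dir="~/.fceux/fcs",
                       emulator="fceux"):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    # shutil.which looks the executable up in $PATH, like a shell would.
    if shutil.which(emulator) is None:
        problems.append(f"'{emulator}' not found in $PATH")
    if not os.path.isfile(rom_path):
        problems.append(f"ROM not found at {rom_path}")
    # Save states only speed up loading, so a missing directory is just a hint.
    if not os.path.isdir(os.path.expanduser(fcs_dir)):
        problems.append(f"state directory {fcs_dir} does not exist (optional)")
    return problems


if __name__ == "__main__":
    for problem in check_nesgym_setup():
        print("WARNING:", problem)
```

Run it from the root of the repository so that the relative ROM path resolves correctly.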

Example usage

For instance, to load the Mario Bros. environment:

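    import gym
    # import nesgym to register environments to gym
    import nesgym

    env = gym.make('nesgym/MarioBros-v0')
    obs = env.reset()

    for step in range(10000):
        # pick a random action, as a placeholder for a learned policy
        action = env.action_space.sample()
        obs, reward, done, info = env.step(action)
        ...  # your awesome reinforcement learning algorithm is here
        if done:
            # start a new episode once the game is over
            obs = env.reset()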

Examples for training DQN

An implementation of DQN, using Keras, is in src/dqn.

You can train a DQN model for Atari with run-atari.py, and for NES with run-soccer.py or run-mario.py.
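
For readers unfamiliar with the "double" variant mentioned at the top of this README: in Double DQN, the online network chooses the next action and the target network evaluates it. The sketch below shows that target computation for a single transition in plain Python. It is an illustration under stated assumptions, not the code in src/dqn: the function name, the `gamma` value, and the use of plain lists in place of Keras predictions are all assumptions.

```python
def double_dqn_target(reward, done, q_online_next, q_target_next, gamma=0.99):
    """Bootstrap target for one transition (s, a, r, s').

    q_online_next: Q-values of the online network for s', one per action.
    q_target_next: Q-values of the target network for s', one per action.
    """
    if done:
        # Terminal transition: there is nothing to bootstrap from.
        return reward
    # The online network selects the greedy action...
    best_action = max(range(len(q_online_next)), key=q_online_next.__getitem__)
    # ...and the target network evaluates that action.
    return reward + gamma * q_target_next[best_action]


# The online network prefers action 1, which the target network values at 2.0.
target = double_dqn_target(1.0, False, [0.5, 3.0, 1.0], [4.0, 2.0, 0.0], gamma=0.9)
print(target)
```

In this toy example, a plain DQN target would instead bootstrap from the largest target-network value (4.0, for action 0). Decoupling action selection from action evaluation is what reduces the overestimation of Q-values.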

Integrating new NES games?

You need to write two files:

- a Lua interface file,

- and an OpenAI Gym environment class file (in Python).

The Lua file needs to get the reward from the emulator (typically by reading it from a memory location), and the Python file defines the game-specific environment.

For an example of a Lua file, see src/lua/soccer.lua; for an example of a Gym environment file, see src/nesgym/nekketsu_soccer_env.py.

This website gives the RAM map of most well-known NES games. It is very useful for reading the score or the number of lives directly from the NES RAM, to use as a reward in the reinforcement learning loop. See, for instance, the page for Mario Bros.
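
To make the idea of a RAM-based reward concrete, here is a minimal sketch of the logic, written in Python for illustration only (in this repository the reward is read on the Lua side). Everything specific in it is an assumption: the address `0x0095`, the three-byte packed BCD score layout, and the names `decode_bcd` and `ScoreDeltaReward` are hypothetical and must be replaced by what the RAM map of your game actually says.

```python
def decode_bcd(ram_bytes):
    """Decode packed BCD bytes (most significant byte first) into an int."""
    value = 0
    for byte in ram_bytes:
        high, low = byte >> 4, byte & 0x0F
        value = value * 100 + high * 10 + low
    return value


class ScoreDeltaReward:
    """Turn the absolute in-game score into a per-step reward."""

    def __init__(self, score_address, num_bytes):
        self.score_address = score_address  # hypothetical RAM offset
        self.num_bytes = num_bytes
        self.previous_score = 0

    def reset(self):
        # Call this whenever the environment is reset.
        self.previous_score = 0

    def __call__(self, ram):
        raw = ram[self.score_address:self.score_address + self.num_bytes]
        score = decode_bcd(raw)
        # The reward is the score gained since the previous step.
        reward = score - self.previous_score
        self.previous_score = score
        return reward


# Fake 2 KiB RAM snapshot standing in for what the emulator would expose.
ram = bytearray(2048)
reward_fn = ScoreDeltaReward(score_address=0x0095, num_bytes=3)  # made-up address
ram[0x0095:0x0098] = bytes([0x00, 0x08, 0x00])  # score reads 000800
first = reward_fn(ram)
ram[0x0095:0x0098] = bytes([0x00, 0x16, 0x00])  # score reads 001600
second = reward_fn(ram)
print(first, second)  # 800 800
```

Using the score difference rather than the raw score keeps the reward tied to what happened during the last step, which is usually what a Q-learning agent expects.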

Gallery

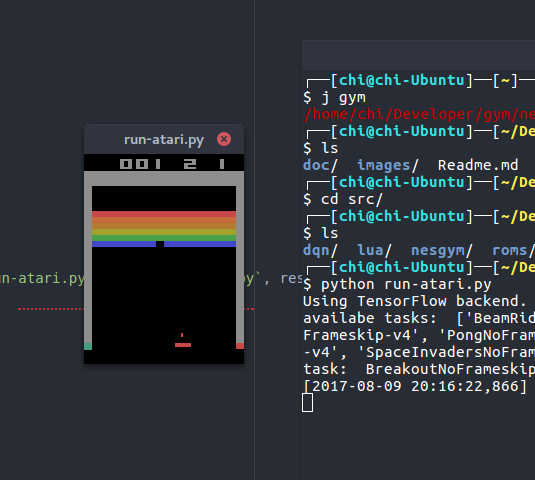

Training Atari games

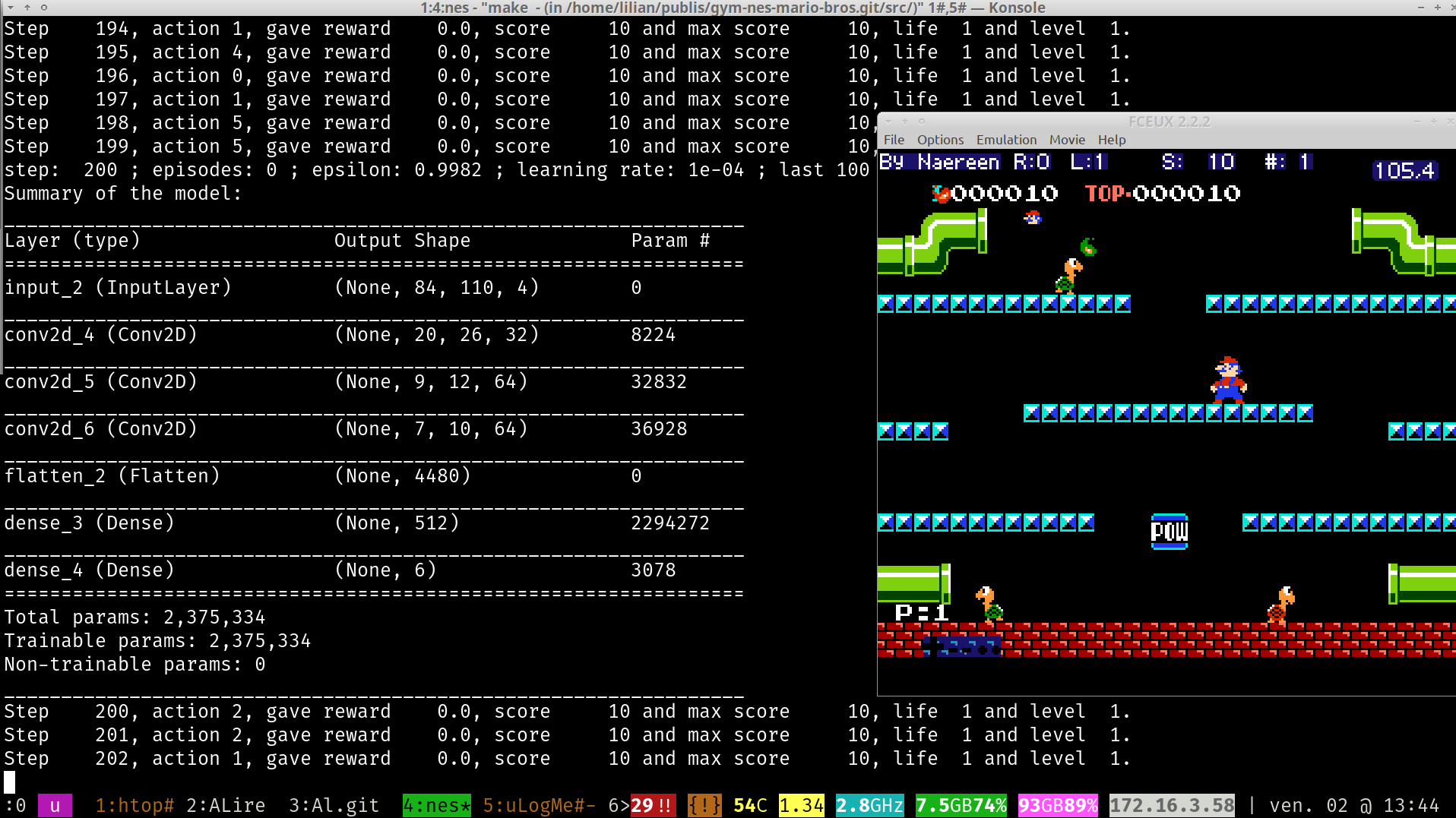

Training NES games

Mario Bros. game

That’s new!

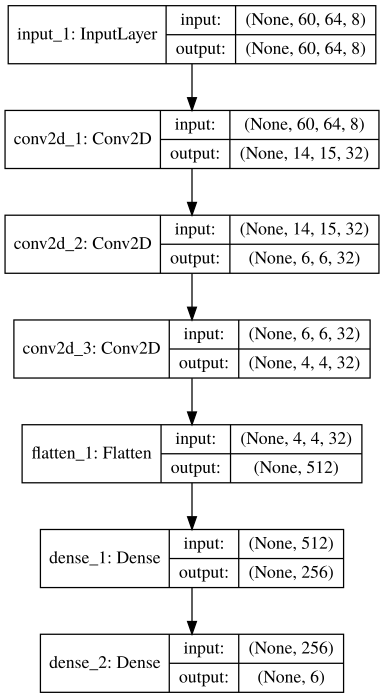

Architecture of the DQN playing Mario:

Overview of the experimentation with 3 emulators:

Soccer game

:scroll: License ?

This (small) repository is published under the terms of the MIT license (file LICENSE). © Lilian Besson, 2018.